Online Supervised Training of Spaceborne Vision during Proximity Operations using Adaptive Kalman Filtering

Abstract

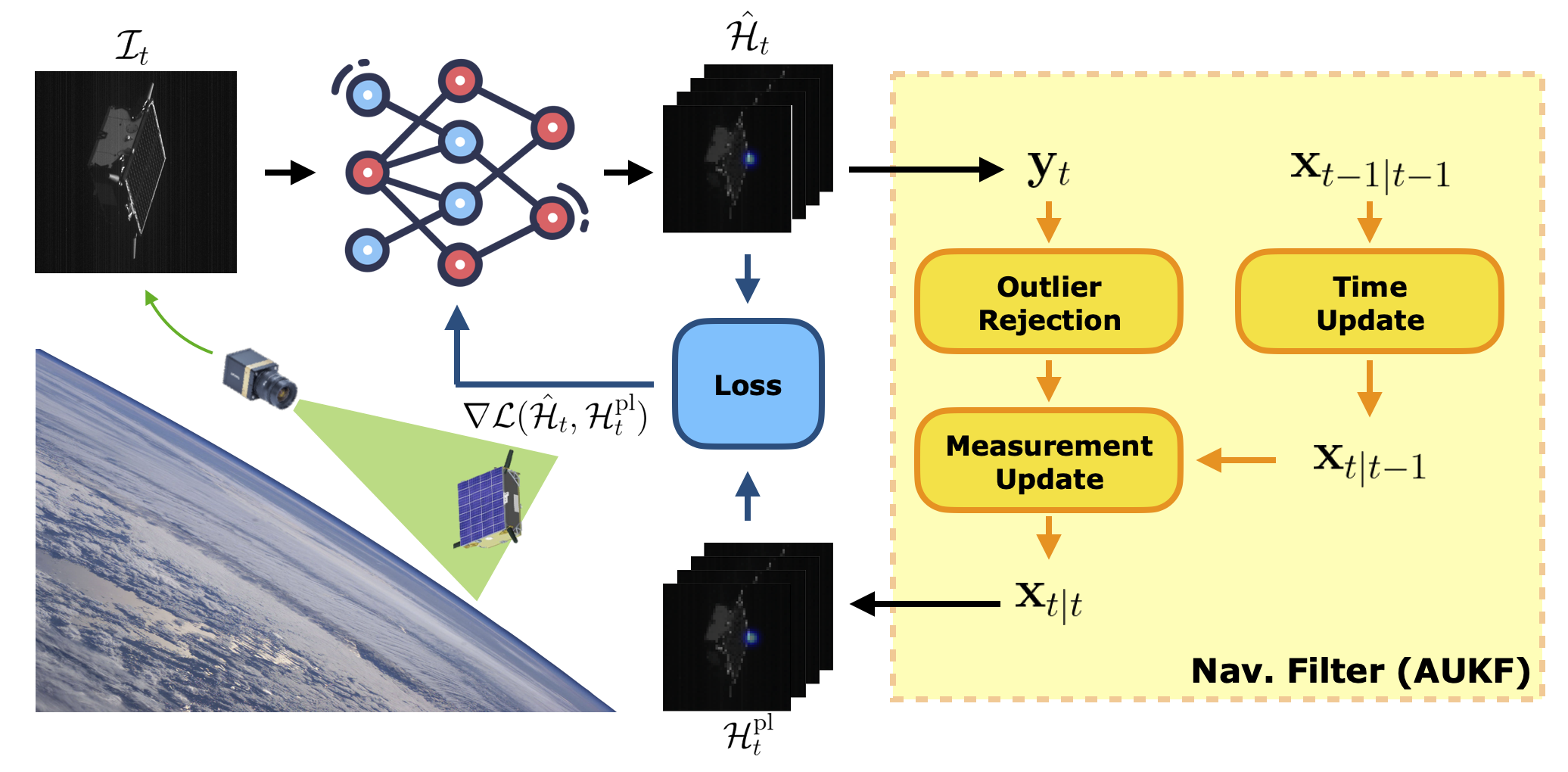

This work presents an Online Supervised Training (OST) method to enable robust vision-based navigation about a non-cooperative spacecraft. Spaceborne Neural Networks (NN) are susceptible to domain gap as they are primarily trained with synthetic images due to the inaccessibility of space. OST aims to close this gap by training a pose estimation NN online using incoming flight images during Rendezvous and Proximity Operations (RPO). The pseudo-labels are provided by an adaptive unscented Kalman filter where the NN is used in the loop as a measurement module. Specifically, the filter tracks the target's relative orbital and attitude motion, and its accuracy is ensured by robust on-ground training of the NN using only synthetic data. The experiments on real hardware-in-the-loop trajectory images show that OST can improve the NN performance on the target image domain given that OST is performed on images of the target viewed from a diverse set of directions during RPO.

Method

Datasets

Because the space environment is inaccessible for data collection, Neural Networks (NN) for spaceborne computer vision are generally trained offline on synthetic images created with a graphics renderer such as OpenGL.

For example, the synthetic images of the SPEED+ dataset can be used to train an NN for monocular pose estimation of a non-cooperative spacecraft.

Because no spaceborne images are available to validate the NN's performance, Hardware-In-the-Loop (HIL) images from a robotic testbed such as TRON at SLAB can serve as on-ground surrogates for the otherwise unavailable flight images when evaluating robustness.

Likewise, the robustness of a navigation filter with the NN as its measurement model can be evaluated on HIL images of representative RPO scenarios such as those in the SHIRT dataset.

Online Supervised Training (OST)

To fully close the domain gap between synthetic and spaceborne imagery, we propose to additionally fine-tune the NN using the flight images that become available in space during RPO. This is done via supervised learning with pose pseudo-labels derived from the navigation filter's a posteriori estimates of the target's pose. In this work, the pseudo-labels are heatmaps around the image locations of known surface keypoints of the target. For computational efficiency, OST performs a single backpropagation step per flight image.
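As a rough illustration of how a pose estimate could be turned into heatmap pseudo-labels, the sketch below projects known 3D keypoints into the image with a pinhole camera model and places a Gaussian at each projected location. It is a minimal sketch under stated assumptions, not the authors' implementation: the function names, the scalar-first quaternion convention, the pose convention `p_cam = R(q) p_body + t`, the Gaussian width `sigma`, and rendering the heatmaps at full image resolution are all hypothetical choices made for this example.

```python
import math

def quat_to_dcm(q):
    """Rotation matrix from a unit quaternion q = (w, x, y, z) (scalar first, assumed)."""
    w, x, y, z = q
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]

def project_keypoints(keypoints_body, q, t, fx, fy, cx, cy):
    """Project 3D keypoints from the target body frame to pixel coordinates.

    Assumed pose convention: p_cam = R(q) @ p_body + t, with the camera
    looking along +z and an ideal (distortion-free) pinhole model.
    """
    R = quat_to_dcm(q)
    pixels = []
    for p in keypoints_body:
        pc = [sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]
        pixels.append((fx * pc[0] / pc[2] + cx, fy * pc[1] / pc[2] + cy))
    return pixels

def gaussian_heatmap(u, v, width, height, sigma):
    """Unnormalized 2D Gaussian with peak value 1 at pixel (u, v)."""
    return [
        [math.exp(-((col - u) ** 2 + (row - v) ** 2) / (2 * sigma ** 2))
         for col in range(width)]
        for row in range(height)
    ]

def pseudo_label_heatmaps(keypoints_body, q, t, fx, fy, cx, cy,
                          width, height, sigma=2.0):
    """One heatmap per keypoint, built from a (filter-estimated) pose (q, t)."""
    pixels = project_keypoints(keypoints_body, q, t, fx, fy, cx, cy)
    return [gaussian_heatmap(u, v, width, height, sigma) for u, v in pixels]

# Hypothetical usage: two keypoints, target 10 m in front of the camera.
keypoints = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
labels = pseudo_label_heatmaps(
    keypoints, q=(1.0, 0.0, 0.0, 0.0), t=(0.0, 0.0, 10.0),
    fx=100.0, fy=100.0, cx=32.0, cy=32.0, width=64, height=64,
)
```

In an OST-style loop, heatmaps like `labels` would serve as the regression target for one gradient step on the current flight image, with `q` and `t` taken from the filter's a posteriori state rather than from ground truth.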

Results

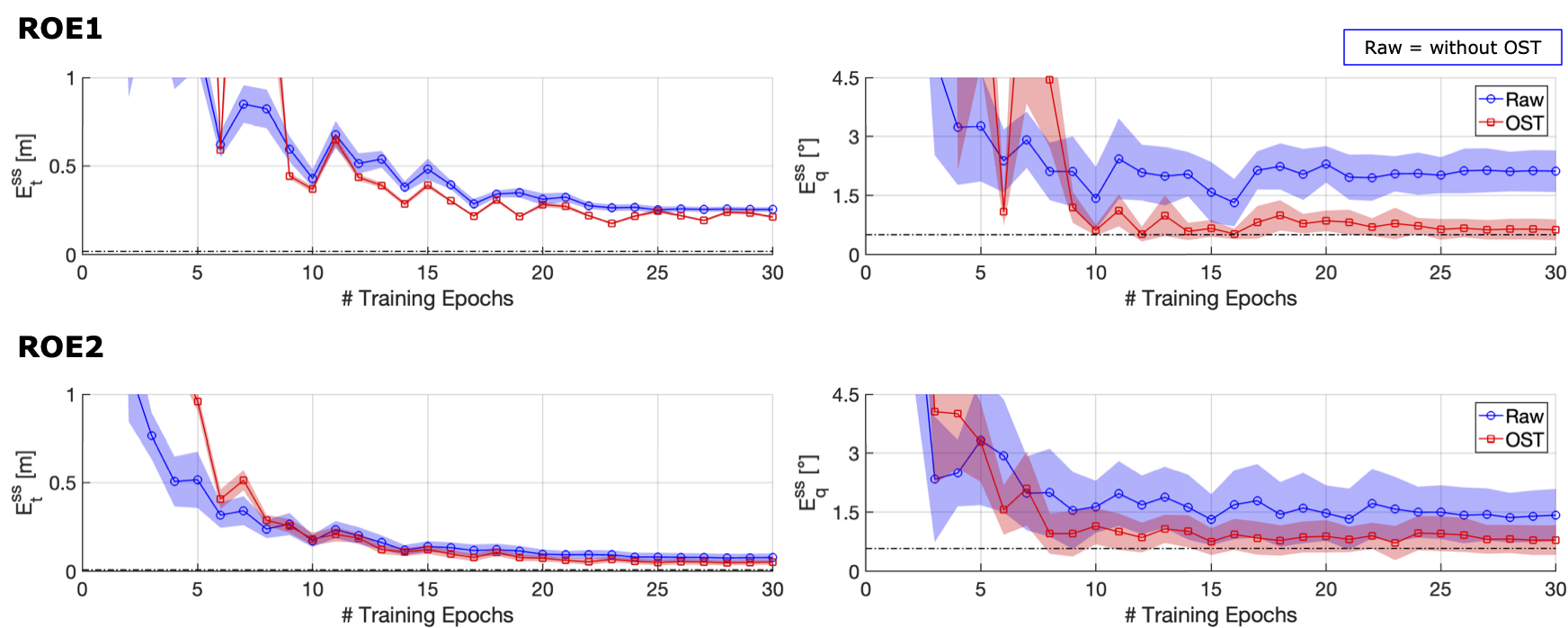

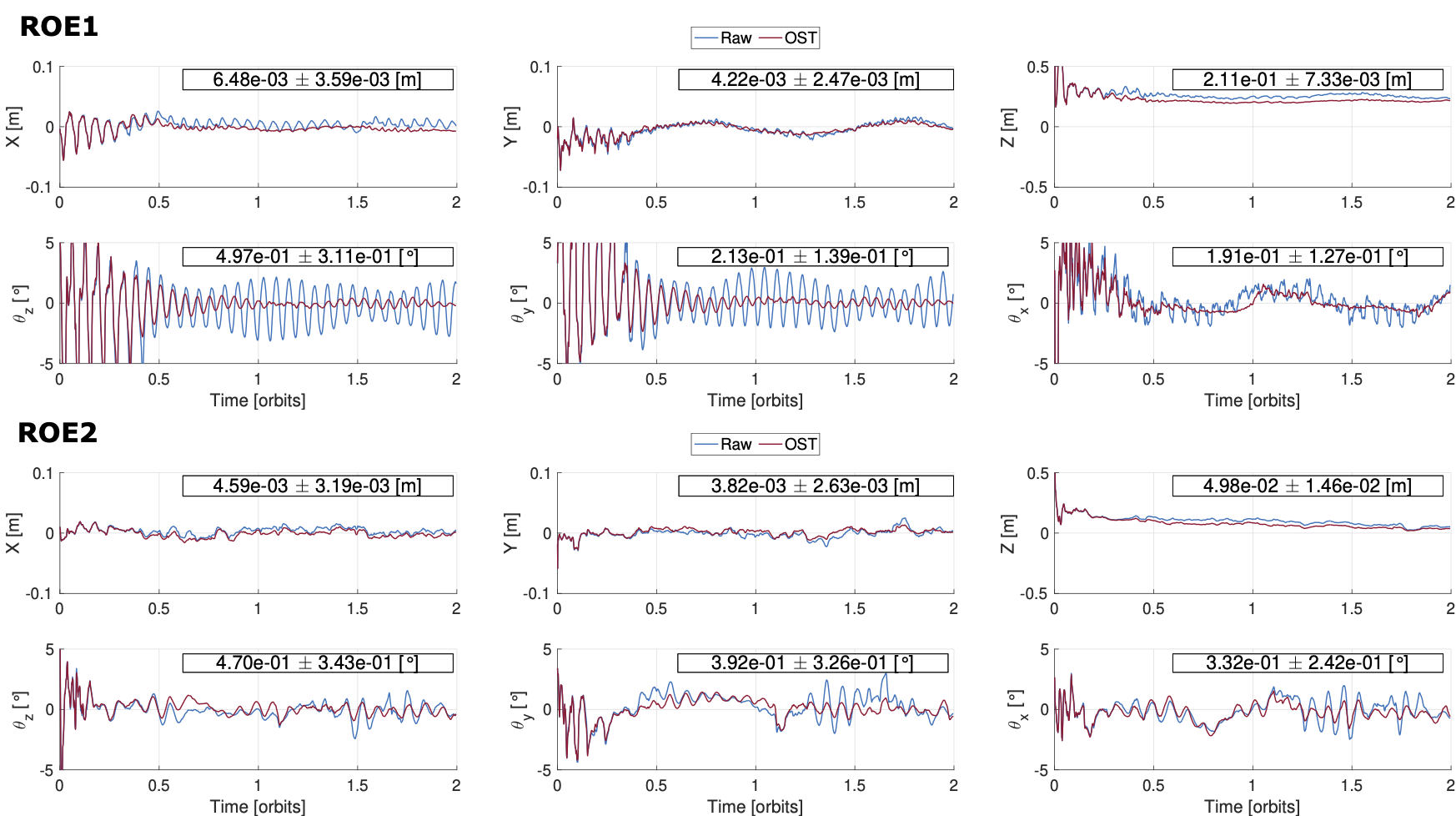

Different levels of domain gap are simulated by prematurely ending the training of an NN, and the filter's steady-state errors for the translation and orientation components are shown. We can see that even when the NN has been trained for only 10 epochs of a 30-epoch training schedule, OST already improves the filter's overall steady-state errors. Moreover, the orientation error is equivalent to that of the filter evaluated on synthetic images of the same trajectory, suggesting that OST fully closes the domain gap for orientation prediction.
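For readers who want to reproduce this kind of comparison, the sketch below shows one common way to compute translation and orientation errors between an estimated and a reference pose, and to average them over the tail of a trajectory. These definitions are assumptions for illustration (Euclidean distance for translation, the rotation angle between unit quaternions for orientation, and a hypothetical `fraction` parameter defining the steady-state window); the page itself does not specify the exact metrics used.

```python
import math

def translation_error(t_est, t_true):
    """Euclidean distance between estimated and reference translation vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(t_est, t_true)))

def orientation_error_deg(q_est, q_true):
    """Angle (degrees) of the rotation separating two unit quaternions.

    The absolute value makes the result identical for q and -q, which
    represent the same attitude.
    """
    dot = abs(sum(a * b for a, b in zip(q_est, q_true)))
    dot = min(1.0, dot)  # guard against round-off slightly above 1
    return math.degrees(2.0 * math.acos(dot))

def steady_state_mean(errors, fraction=0.5):
    """Mean error over the last `fraction` of a trajectory (assumed window)."""
    start = min(len(errors) - 1, int(len(errors) * (1.0 - fraction)))
    tail = errors[start:]
    return sum(tail) / len(tail)

# Hypothetical usage: per-frame orientation errors along a short trajectory.
q_ref = (1.0, 0.0, 0.0, 0.0)
q_hist = [
    (math.cos(math.radians(a) / 2), 0.0, 0.0, math.sin(math.radians(a) / 2))
    for a in (20.0, 10.0, 2.0, 2.0)
]
per_frame = [orientation_error_deg(q, q_ref) for q in q_hist]
steady = steady_state_mean(per_frame, fraction=0.5)
```

Averaging only over the tail of the trajectory separates the converged behavior of the filter from its initial transient, which is why a window parameter is exposed here.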

The improvement in filter convergence is more pronounced in ROE1, which is a more difficult scenario, as shown above.

Paper

Online Supervised Training of Spaceborne Vision during Proximity Operations using Adaptive Kalman Filtering

Tae Ha Park, Simone D'Amico

Please send feedback and questions to Tae Ha "Jeff" Park

Citation

@INPROCEEDINGS{park_2024_icra_ost,

author={Park, Tae Ha and D'Amico, Simone},

booktitle={2024 IEEE International Conference on Robotics and Automation (ICRA)},

title={Online Supervised Training of Spaceborne Vision during Proximity Operations using Adaptive Kalman Filtering},

year={2024},

pages={11744-11752},

doi={10.1109/ICRA57147.2024.10610138}

}

Acknowledgements

This work is supported by the US Space Force SpaceWERX Orbital Prime Small Business Technology Transfer (STTR) contract number FA8649-23-P-0560 awarded to TenOne Aerospace in collaboration with SLAB.

This page is created with the Instant NGP template.